Introduction

LLM Stack is a platform that turns your LLM prompts into production-ready APIs, handling all the infrastructure, monitoring, and scaling while letting you focus on building AI features.

Our goal is to bring stability and reliability to the ever-changing world of AI advancements by providing consistent, scalable, and easy-to-use APIs out of the box. Just plug in your LLM prompt and get started.

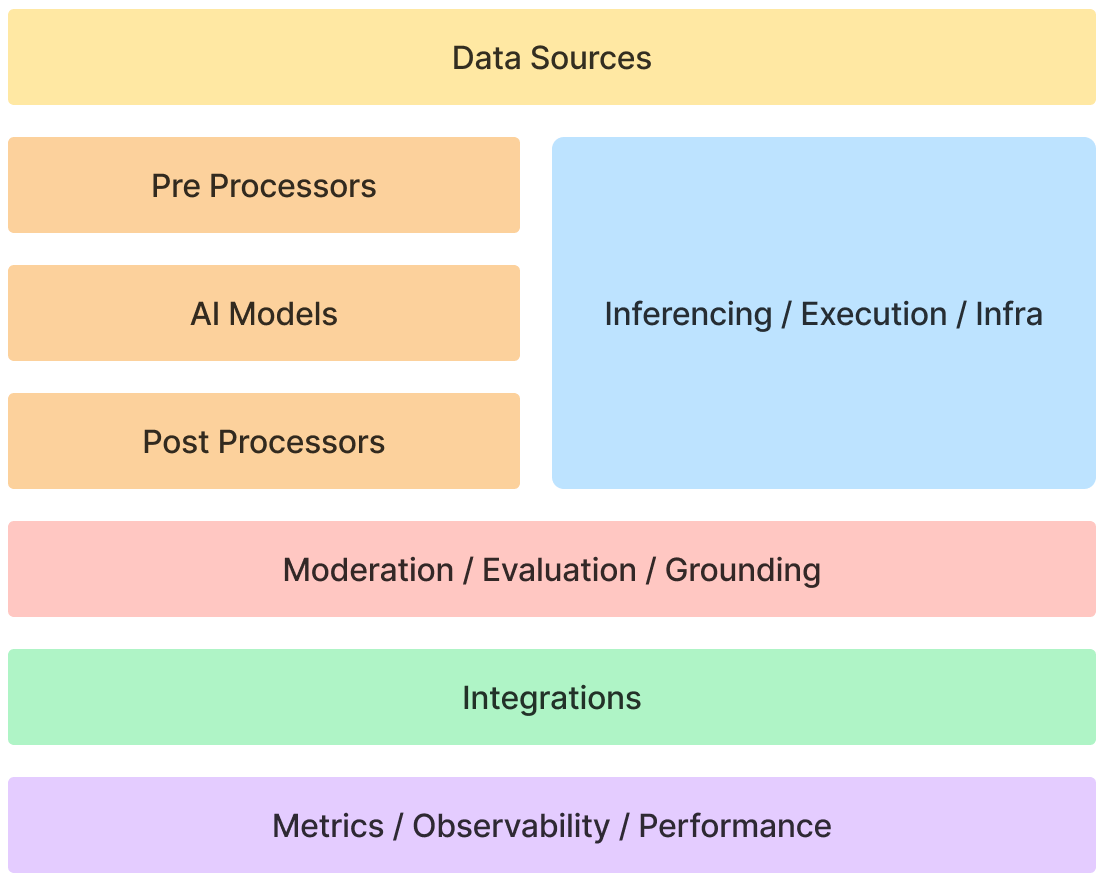

The diagram above gives a high-level overview of the LLM Stack architecture. It shows the core building blocks needed to build production-ready AI applications. These building blocks are interoperable components, and a broad set of service providers offer integrations for them within LLM Stack.

Build with LLM Stack

🚀 Deploy in 5 minutes - Skip weeks of infrastructure setup

💰 Single dashboard for all LLMs - Monitor costs across multiple model providers

🔍 Debug with context - Stop digging through logs to find what broke

🔄 Built-in vector store - No need to build your own context system

⚡ Instant API changes - Test new prompts without code deploys

We are constantly working with developers to improve the platform and make it easier to build AI applications. We welcome feedback and questions; email us at hi@llmstack.dev.

Build AI apps faster and ship with confidence.